Framepack AI

Framepack AI is an AI video generation model that creates high-quality videos up to 120 seconds long with just 6GB of VRAM, using fixed-length context compression technology.

FramePack Studio is an image-to-video generation platform based on frame context packing technology. It converts static images into high-quality 30 FPS videos lasting 60+ seconds and requires only 6GB or more of GPU memory.

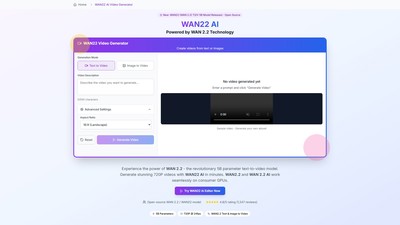

WAN22 AI is a text-to-video AI model powered by WAN 2.2 technology. It uses the 5B-parameter TI2V-5B model to generate 720p videos from text or images and runs on consumer GPUs.

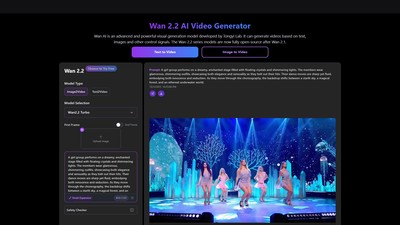

Wan 2.2 is a visual generation model developed by Tongyi Lab that generates videos from text, images, and other control signals. Like Wan 2.1 before it, the Wan 2.2 series is fully open source and provides high-quality text-to-video and image-to-video generation, with support for consumer-grade GPUs.

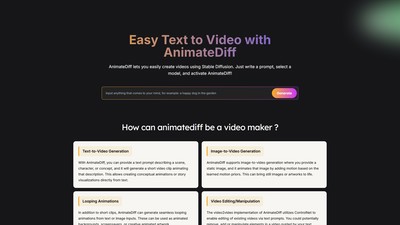

Main features: AnimateDiff is an AI video generation tool that supports text-to-video and image-to-video conversion, turning text descriptions or static images into animated videos. Core features include animation from text or image input, looping video creation, video editing (via ControlNet), personalized animation (with DreamBooth/LoRA), and integration into creative workflows. Target users: artists, animators, game developers, content creators, educators, and social media users. Key advantages: plug-and-pl